Have you experienced an unusual fall in enquiries since the end of May? Check your website statistics – a loss in traffic could have affected your new business pipeline. This could be linked to Google’s announcement of their latest algorithm update, Panda 4.0, on the 19th of May 2014. If you’re still struggling to regain your rankings, have a look at our 10-step guide on the actions you can take to improve your website.

What Is Google Panda 4.0?

Google’s search engine answers users’ queries using algorithms: logic written by its developers to return the pages that are most relevant and useful to users, by increasing their ‘rank’ relative to other pages. Google works continually to improve this, and often names its updates after animals! Panda 4.0 is the 26th major update made to the algorithm. Google also rolls out monthly refreshes of its Panda algorithm, but this update is more than just a data refresh: it changes the way Google identifies and penalises websites with low-quality content.

Panda 4.0 helps fight content thieves and spammers by preventing duplicated content from appearing in search results; this demotion is known as a ‘penalty’. Websites can be further penalised if they continually fail to adhere to Google’s quality guidelines. The update also aims to reward websites that consistently post fresh, interesting content, by making them appear higher in search results.

Barracuda Digital has created a tool which links up with your Google Analytics profile to display the change in organic traffic after every major algorithm update. If you notice a steep decline in traffic after one of the red panda lines, there is a high likelihood that Google has detected issues with the content of your website.

WHAT SHOULD I DO IF I BELIEVE MY WEBSITE HAS BEEN HIT BY PANDA 4.0?

If your website experienced a sudden drop in rankings at the end of May 2014, you could have been hit by a Google penalty as a result of the algorithm change. This can happen when a website uses techniques that Google regards as spammy attempts to manipulate search rankings, whether or not they were intentional.

Fixing the penalty will not be a 5-minute job, so make sure you have plenty of time to investigate and fix the issues. Here’s my 10-step guide on the actions you can take to improve your website, so it can regain a good ranking in Google’s search engine.

1. OVER OPTIMISATION

Start by checking your title tags to ensure they are not stuffed with keywords: try to include one or two keywords, along with your brand name. They should not exceed the 487-pixel limit set by Google – use the title tag preview tool from Moz when re-optimising title tags.

Next, check whether your website still has the meta keyword tag implemented. The meta keyword tag is an additional HTML tag, which was used on websites in the past to tell search engines the terms you wished your website to rank for. If your website still has this tag, then it’s best to have it removed. Google does not use this tag as a ranking factor, and Bing may actually use it to downgrade websites in its search engine.

Finally, review the content on your web pages and ensure it has not been stuffed repeatedly with the same keywords. Aim to include your targeted term no more than three or four times across the page, along with some closely related keyword terms. This will help you to rank one landing page for a variety of terms.
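The checks in this section can be roughed out in a few lines of Python. This is only a sketch: the names `HeadAudit`, `count_phrase` and `audit_page` are made up for illustration, the `max_uses` default simply mirrors the guidance above, and it does not measure the pixel width of a title (use a preview tool for that).

```python
from html.parser import HTMLParser
import re


class HeadAudit(HTMLParser):
    """Collects the <title> text and notes whether a meta keywords tag exists."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.has_meta_keywords = False
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "keywords":
            self.has_meta_keywords = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def count_phrase(text, phrase):
    """Counts case-insensitive, whole-word occurrences of phrase in text."""
    pattern = r"\b" + re.escape(phrase) + r"\b"
    return len(re.findall(pattern, text, flags=re.IGNORECASE))


def audit_page(html, body_text, keyword, max_uses=4):
    """Returns a small report for one page (max_uses is an assumed threshold)."""
    parser = HeadAudit()
    parser.feed(html)
    uses = count_phrase(body_text, keyword)
    return {
        "title": parser.title.strip(),
        "has_meta_keywords": parser.has_meta_keywords,
        "keyword_uses": uses,
        "keyword_overused": uses > max_uses,
    }
```

Pass in the page’s HTML, its visible text and the term you are targeting; a page that reports `has_meta_keywords` or `keyword_overused` as true is worth reviewing by hand.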

2. BROKEN LINKS

You should also audit your website for any broken internal links and make sure they are fixed. Broken links hinder the user experience of your website: if Google’s search spiders encounter too many broken links or soft 404 errors, this can count against your rankings. There are a couple of tools which will help you easily identify broken links. I use the Screaming Frog SEO Spider, but you can also use Xenu, which does just as good a job.
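If you would rather script a quick check yourself, the idea behind these tools can be sketched as follows. The function names are hypothetical, and `get_status` is a function you supply that returns the HTTP status code for a URL, so the sketch itself makes no network requests. It will not catch soft 404s, which return a 200 status.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(page_url, html):
    """Returns unique absolute URLs on the same host as page_url."""
    collector = LinkCollector()
    collector.feed(html)
    site = urlparse(page_url).netloc
    links = []
    for href in collector.hrefs:
        # Resolve relative links and drop any #fragment.
        absolute = urldefrag(urljoin(page_url, href)).url
        if urlparse(absolute).netloc == site and absolute not in links:
            links.append(absolute)
    return links


def find_broken(page_url, html, get_status):
    """get_status is caller-supplied: URL -> HTTP status code."""
    return [url for url in internal_links(page_url, html) if get_status(url) >= 400]
```

Keeping the status lookup separate means you can plug in whatever HTTP client you already use, or a simple dictionary of known results while testing.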

3. UNIQUE META TAGS

Another issue which is easy to identify and fix is duplicate meta tags. Each page’s meta tags should be unique and clearly describe the content the user will find on that specific page. The same keywords and descriptions should not be used across multiple pages.

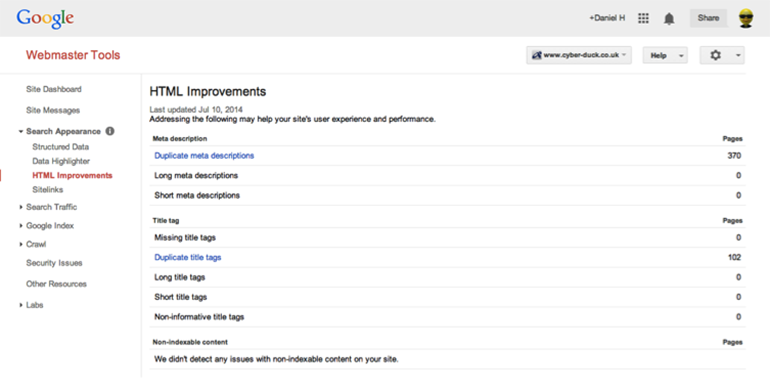

To identify pages with duplicate meta data, log in to your Webmaster Tools account and click Search Appearance -> HTML Improvements for a full list. If you do not have a Webmaster Tools account, I suggest you set one up, although the Screaming Frog SEO Spider can also be used for this task.

The HTML improvements page in Webmaster Tools helps to identify improvements that can be made to your meta tags.
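If you already have an export of your URLs and their meta descriptions (or titles), spotting the duplicates described above takes only a few lines. A minimal sketch, assuming a plain dictionary of URL to text; the function name is hypothetical.

```python
from collections import defaultdict


def find_duplicates(pages):
    """pages maps URL -> meta description (or title).

    Returns each value shared by more than one URL, with the URLs using it.
    """
    by_value = defaultdict(list)
    for url, value in pages.items():
        # Ignore differences in case and spacing when comparing.
        normalised = " ".join(value.lower().split())
        by_value[normalised].append(url)
    return {value: sorted(urls) for value, urls in by_value.items() if len(urls) > 1}
```

Each entry in the result is a description that needs rewriting on all but one of the listed pages.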

4. ADS ABOVE THE FOLD

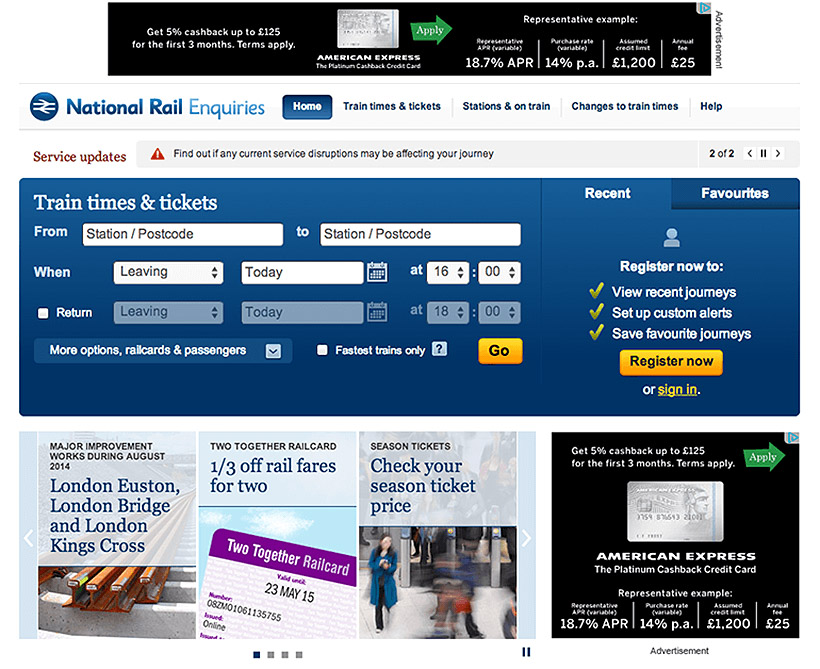

If you do not have any advertising present on your website, then please skip to section 5. If you do, then you will want to examine the amount of ad space you are providing to your advertisers. If your advertising banners are so big that users have to scroll to find the content they are looking for, then this may contribute towards a penalty. In the example below, we can see how the National Rail website uses a minimal amount of advertising space, which makes a penalty for excessive ads unlikely.

The National Rail website uses an optimal amount of ad space on the homepage of their website.

If you are currently trying to recover from a Panda penalty, then I would advise you to remove all banners from your website until the penalty is removed.

5. LOW CLICK-THROUGH RATES

There is a variety of statistical data that Google will use to determine the quality of the content on your website. One of those is the click through rate (CTR). If the CTR of your website is abnormally low, then this raises a red flag to Google’s web spam team that the content is of low quality.

The current CTR of your website can be found by logging in to your Webmaster Tools account and clicking Search Traffic -> Search Queries. You will then be presented with all the terms used to reach your website, and the CTR your website received for each term. You can then search for these terms in Google and find your website, so you can identify the specific landing page with a low CTR.
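CTR is simply clicks divided by impressions, expressed as a percentage. If you export the search queries report, a short script can flag the weakest terms. This is a sketch only: the function names are hypothetical, and the threshold is something you choose yourself, as Google does not publish what it considers abnormally low.

```python
def click_through_rate(clicks, impressions):
    """CTR as a percentage; returns 0.0 when there are no impressions."""
    if impressions == 0:
        return 0.0
    return 100.0 * clicks / impressions


def low_ctr_queries(rows, threshold_pct):
    """rows: iterable of (query, impressions, clicks).

    Returns (query, ctr) pairs below threshold_pct, lowest CTR first.
    Queries with no impressions are skipped.
    """
    scored = [(q, click_through_rate(c, i)) for q, i, c in rows if i > 0]
    return sorted([r for r in scored if r[1] < threshold_pct], key=lambda r: r[1])
```

For example, a term with 2,000 impressions and 10 clicks has a CTR of 0.5%, so it would be flagged with a 2% threshold.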

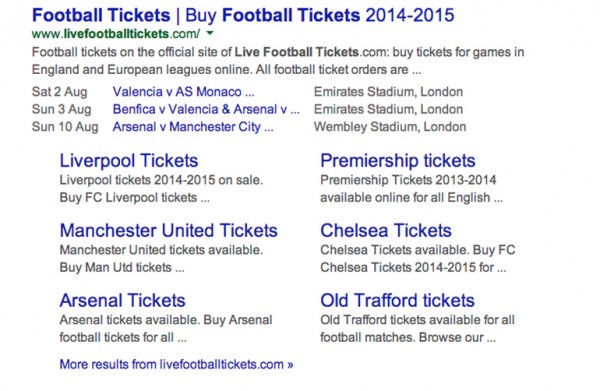

One of the easiest ways to improve the CTR of your website is to mark up your web content using schema.org and to ensure your blog has Google authorship implemented. Once this additional markup is added to your website, extra pieces of information from your pages can be shown in Google’s search results, which will help to increase the CTR of your website. Unique meta tags can also help – skip back to section 3 for our tips here.

The Live Football Tickets website demonstrates how additional microdata markup can improve the appearance of your website within the SERPs.
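To give a feel for what schema.org markup looks like, here is a sketch that builds the markup for an event. Note that it uses the JSON-LD format rather than the microdata attributes mentioned above, simply because JSON-LD is easier to generate from a script; the function name and all the example values are hypothetical.

```python
import json


def event_json_ld(name, start_date, venue, url):
    """Builds a schema.org Event description as a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Event",
        "name": name,
        "startDate": start_date,  # ISO 8601 date or date-time
        "location": {"@type": "Place", "name": venue},
        "url": url,
    }
    return '<script type="application/ld+json">' + json.dumps(data) + "</script>"
```

The returned tag would be placed in the HTML of the page that describes the event. Whether Google shows the extra information in its results is entirely at its discretion.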

6. USE THE REL=CANONICAL TAG

If you are creating content and a large portion of it is duplicated from another resource on the web (either your own website or an external one), then you need to make sure you are using the rel=canonical tag. This tag tells Google where the original content comes from, and helps prevent you from being penalised for the duplication.
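The tag itself sits in the head of the page and takes the form `<link rel="canonical" href="...">`, where the href is the URL of the original content. To audit which of your pages declare one, a small parser is enough. This is a sketch with hypothetical names, using only Python’s standard library.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag found."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rels = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and self.canonical is None:
            self.canonical = attrs.get("href")


def canonical_url(html):
    """Returns the canonical URL declared in the HTML, or None if absent."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical
```

A result of `None` on a page with borrowed content tells you the tag still needs to be added.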

7. CONTENT ERRORS

You will also need to regularly review your website to ensure there are no spelling or grammatical errors within your content. If Google continues to identify these issues then this will contribute towards a penalty. All information on your website should be up to date with the latest trends and developments within your industry.

8. WEBSITE ARCHITECTURE

Having a clear and logical flow to the structure of your website will not only help users to navigate between pages, but also improve the indexation of your website. This is because search engine spiders will be able to navigate through your website easily and identify any changes.

At Cyber-Duck, we generally suggest the following improvements to the architecture of a client website:

- Implement a breadcrumb trail on all landing pages of your website. A breadcrumb trail is a navigational aid which allows users to keep track of their location within your website, and it also has the additional SEO benefit of improving the internal link structure of the website

- Include no more than 100 links per landing page

- Ensure you use clean, crisp navigation on your website. Our UX experts always recommend implementing a top navigation over a left navigation
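The 100-link guideline in the list above is easy to check with a script. A minimal sketch, with hypothetical names, that counts the `<a>` tags carrying an href on a page:

```python
from html.parser import HTMLParser


class LinkCounter(HTMLParser):
    """Counts <a> tags that have a non-empty href attribute."""

    def __init__(self):
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "a" and dict(attrs).get("href"):
            self.count += 1


def exceeds_link_limit(html, limit=100):
    """Returns (number_of_links, True if over the limit)."""
    counter = LinkCounter()
    counter.feed(html)
    return counter.count, counter.count > limit
```

Run it against the HTML of each landing page and review any page that comes back over the limit.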

9. PAGE SPEED

It has long been known that the speed of your website has an impact on your search engine rankings: Search Engine Land includes this as a ranking factor in its periodic table of SEO.

I recommend you use Google PageSpeed, a handy tool which has recently been updated to show how you can improve the speed of your website, as well as recommendations on how to improve the user experience of your website on mobile.

10. DUPLICATE OR THIN CONTENT

Finally, you will want to identify any issues with duplicate content on your website. Duplicate content can be internal (repeated within your own website) as well as external (shared with other websites). This means it is not advisable to use the same content across multiple pages on your website.

INTERNAL CONTENT

This is only an issue if users are accessing the exact same page from two different URLs on your website. If this is the case, then any of the following changes could be applied to resolve the issue:

- 301 Redirect: set up a permanent redirect from all duplicate pages to the original landing page. This is normally the best option, as all the SEO value gained on the duplicate pages will be passed on to the original.

- Canonicalisation: as mentioned in section 6, you can use the rel=canonical tag to reference the original source of content either on your own website, or from external resources.

- Robots.txt file: if you are creating a new landing page and are knowingly duplicating the content from elsewhere, then you may want to disallow the duplicate page in your robots.txt file, to stop Google from crawling it and so reduce the chance of it being indexed.
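To illustrate the first option in the list above, here is a sketch of a 301 redirect written as a tiny Python WSGI application. The URL paths and the `REDIRECTS` table are hypothetical, and in practice most websites set up redirects in their web server or CMS configuration rather than in application code; the point is simply what the server sends back for a duplicate URL.

```python
# Hypothetical map of duplicate URL paths to the original landing page.
REDIRECTS = {
    "/widgets-copy": "/widgets",
    "/index.php/widgets": "/widgets",
}


def app(environ, start_response):
    """WSGI app: permanently redirects known duplicates to the original."""
    path = environ.get("PATH_INFO", "/")
    target = REDIRECTS.get(path)
    if target is not None:
        # 301 tells browsers and search engines the move is permanent.
        start_response("301 Moved Permanently", [("Location", target)])
        return [b""]
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"original page"]
```

For a quick local trial, the app can be served with `make_server` from Python’s built-in `wsgiref.simple_server` module.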

EXTERNAL CONTENT

Here it should be noted that Google will only penalise the individual landing pages with duplicated content, rather than the entire domain. The only way you could receive a domain wide penalty would be if copied content is spammy, or stuffed with keywords.

However, Google will look to rank the original author, and drop any pages containing duplicate content. This is because they do not want to show the same content multiple times within their search results. With this in mind, it’s firstly important to make sure that all the content you are producing is unique. Then you should do all you can to ensure Google recognises you as the original author. This can be done by:

- DMCA Request: You can submit a DMCA request, which will inform Google that you are the original author. Here, any other ‘author’ does have the option to dispute your request. However, if they admit to copying the content, then their article will be dropped in favour of your article, as the original.

- Google Authorship: if this is implemented on your blog, the search engine will index the content much more quickly. This helps you to be recognised as the original author, as Google crawled the content there first.

Conclusion

If you believe your website has been hit by a Google penalty, then I would strongly advise that you stop all your current SEO activity and focus on getting the penalty removed. Overturning a penalty can be a lengthy task, and you will not see any substantial increase in traffic until it is fixed. Our 10-step guide shows you where to start, but if you require some additional help, then get in touch with Cyber-Duck and see how we can help push your sales back to their normal numbers.